Consider a Fortune 500 logistics company building an internal agent. In the demo, it flawlessly answers, "What is the surcharge for hazardous materials?" The VP approves it.

Three weeks later in production, a sales rep asks, "Can we ship Lithium-Ion batteries to a European warehouse?" The agent's confident affirmation later resulted in the shipment being rejected at the dock and a $15,000 compliance fine.

What has changed now? Why did it fail? The answer lies in the Retrieval Augmented Generation (RAG).

The specific clause about warehouses was buried in a footnote table, separated by a generic page break. The RAG system’s chunking strategy split the rule in half, feeding the agent incomplete context.

But the business stakes are real.

According to a Gartner AI survey, only 48% of AI projects are successfully deployed into production, and those that do take an average of 8 months to bridge the gap from prototype to deployment. Furthermore, 30% of GenAI projects are abandoned entirely after Proof of Concept (POC), with poor data quality cited as the primary culprit by 43% of Chief Data Officers.

Today, the board-level question is shifting from “Can we demo AI?” to “Can we trust AI outputs in production?” For knowledge-heavy workflows (support, compliance, sales engineering, operations, etc.), that trust rests heavily on three controllable levers, namely Chunking, Reranking, and Freshness Decisions.

Let's take a closer look at them.

This Article Contains:

Chunking: Teaching your agent to read

Often, the information you're looking for is buried in a 47-page PDF. What's relevant to you is a sentence or a paragraph. A page, at most. However, you have to find it rigorously in the document.

This problem only exacerbates when you use AI. LLMs like ChatGPT and Claude don't read documents page by page like us. They load it completely in their context window and then look for the relevant info. This is not only expensive, as the needless bits of information still cost you input tokens, but they also significantly limit your capabilities by occupying the context window while degrading your overall performance.

Here, chunking plays a pivotal role by extracting, storing, and retrieving only the relevant bits of information whenever you need.

Chunking is the preprocessing technique of breaking down large datasets or files into smaller, manageable, and meaningful portions. It is critical for improving search accuracy, reducing hallucinations, and overcoming context window limitations.

Let's delve into the types of chunking techniques.

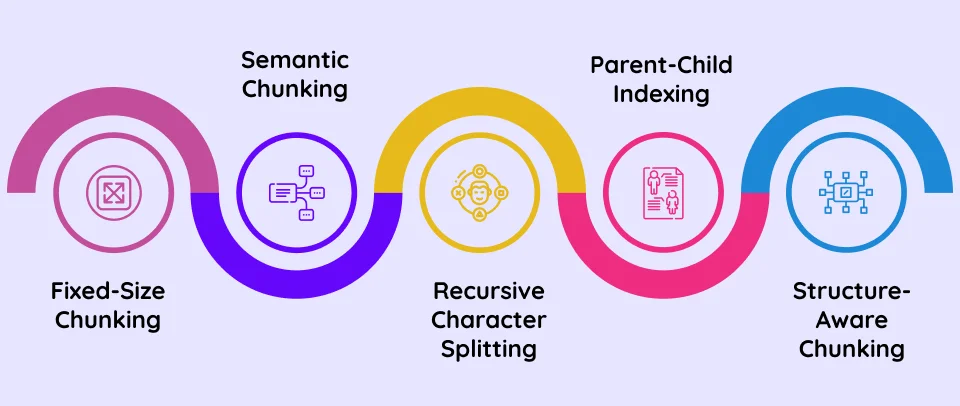

1. Fixed-size chunking

Most out-of-the-box RAG pipelines use fixed-size chunking. This implies splitting documents into arbitrary blocks of 500 or 1,000 tokens, with optional overlap between adjacent chunks (e.g., 10-20%).

The fixed-size chunking approach does not try to understand structure, headings, or meaning. It simply divides content into consistent segments.

For instance, if your 10-page policy document is 5,000 tokens and your chunk size is 500 tokens with a 50-token overlap, the system will create approximately 11 chunks. Each chunk is indexed separately in your vector database. At query time, retrieval will operate at the chunk level, not the document level.

It is the equivalent of tearing off every page of a textbook and creating its fixed-sized clippings. As you can already imagine, this approach creates multiple issues. Firstly, you'll most likely clip something mid-sentence or mid-paragraph. This will make you lose critical context.

Secondly, this approach further increases conflict and data collision, as semantically similar information will appear true while being a part of two unrelated topics. For example, a clipping describing the anatomy of a lion's limbs can overlap with another clipping describing the muscle structure of an elephant's limbs. If clear markers are not present, the data can look contradictory and create undesirable results.

2. Semantic chunking

To fix the abovementioned problem, production systems rely on the second and more dynamic chunking strategy called Semantic Chunking. Instead of creating character-based chunks, this approach splits content based on meaning.

The technique uses AI models or programmable logic to scan the document and find logical breaks before splitting the data. This ensures that when an agent retrieves the information, it gets the complete context surrounding it. Depending on how it's implemented, each chunk may additionally contain metadata that further adds context to the chunked data.

A recent study published in PMC (PubMed Central) shows that adaptive indexing strategies achieve 87% accuracy in retrieval-augmented tasks, compared to 50% accuracy in fixed-size or standard chunking in answering complex queries, due to the loss of context in the latter.

Here are the two most critical engineering patterns used in semantic chunking:

a. Recursive Character Splitting

This approach is designed to keep related text together. Instead of chopping text at exactly at the 500th character, the system recursively tries to split by logical separators in a specific order.

For instance, the first pass for the system would be to look for double line breaks. This keeps entire paragraphs intact. A second pass, in case a paragraph is still too big, is to look for single line breaks or periods. And the last resort is to only split by spaces or characters if absolutely necessary.

b. Parent-Child Indexing

In this approach, we split the document into smaller chunks that are linked to their parent elements. This approach makes information highly modular without losing context of the surrounding text.

For instance, when the user asks about Admission Fees, the system retrieves the information. However, this retrieved chunk is also linked to the broader 'Admission' section, which contains the clause that mid-term admissions will involve higher fees. If the information wouldn't have been bound, this context would've been lost, leading to a bad user experience.

3. Structure-aware chunking

Structure-aware chunking focuses on the parsing stage. As the name suggests, it honors the overall visual layout of the document, intelligently identifying headers, titles, footers, tables, lists, columns, sections, figures, etc., to define boundaries or as its chunking mechanism.

For instance, it recognizes that a two-column PDF should be read down Column A, then down Column B, rather than reading across the page, which would cause mixup. Similarly, it can structure and chunk information based on H1, H2, H3, etc., thereby ensuring hierarchy and segmenting information appropriately.

Structure-aware chunking is extremely helpful in cases of technical documents and ensures the text is extracted in a logical reading order.

Reranking: The latency cost of precision

While modern LLMs boast massive context windows, their ability to use information within that context is not uniform. A well-known study from Stanford University and University of California, Berkeley, titled Lost in the Middle: How Language Models Use Long Contexts, identified a phenomenon known as “Lost in the Middle.”

The researchers observed that model performance often follows a U-shaped curve with respect to the position of relevant information in the prompt. LLMs tend to use information most effectively when it appears near the beginning or end of a long context, while information placed in the middle is somewhat less likely to influence the model’s answer.

In retrieval-augmented generation (RAG) systems, this positional sensitivity can matter. If many retrieved passages are inserted into the prompt, the order in which they appear can affect how effectively the model uses them. When the most relevant information ends up buried among many other chunks, it may be less likely to influence the model’s response than if it appeared earlier in the context.

So, reading the right data alone isn't enough. You need it ranked appropriately as well. Here, reranking ensures the system or the retrieval pipeline finds the right data in the right order.

Reranking becomes necessary in part because the first stage of retrieval is inherently approximate. Most RAG pipelines rely on vector search using databases such as Pinecone, Weaviate, or Milvus. These systems are designed for speed and semantic similarity, identifying the nearest neighbors to a query in embedding space.

However, mathematical similarity does not always correspond to true relevance for a specific task or business context. As a result, the top retrieved chunks may not always appear in the optimal order for the model to reason over them.

For instance, in a knowledge base of 100,000+ documents, a vector search for 'Project Alpha Q3 timeline' might also return the Q3 timeline for 'Project Beta' simply because the document structure, phrasing, and keywords are nearly identical.

If the agent blindly uses the top three results from a vector search, it risks contaminating its context window.

To solve this, production-grade RAG systems employ a strict two-step process.

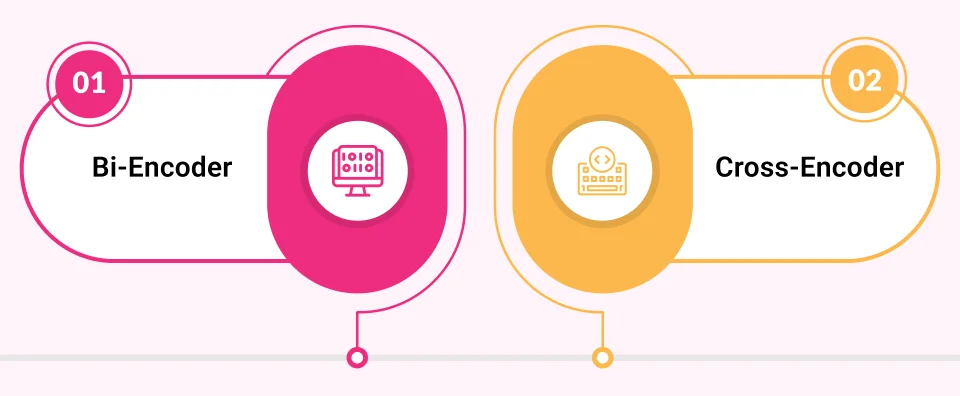

1. Bi-Encoder

A fast, lightweight vector search retrieves the top 50-100 potentially relevant chunks. That means it pulls many 'maybe relevant' items. This prioritizes speed and recall (casting a wide net). Without reranking, low-quality candidates consume search budget, increase hallucination risk, and inflate latency/cost downstream.

2. Cross-Encoder

A specialized reranking model rescoring the top 50 retrieved chunks evaluates each chunk jointly with the query. Unlike vector search, which compares similarity, the reranker processes the query and chunks together, enabling deeper interaction and a more precise relevance score. It then reorders the results accordingly.

The impact is statistically significant. Adding a reranking step yields double-digit percentage improvements in relevance metrics over first-stage retrieval alone. Benchmarks on MS MARCO and TREC Deep Learning show cross-encoder reranking can raise nDCG@10 by ~50-60% over sparse baselines. In practical terms, this means far fewer irrelevant chunks reach the LLM, thereby reducing hallucinations, improving answer accuracy, and materially increasing user trust without requiring a larger model.

However, a business trade-off of reranking is latency. It typically adds 100ms to 500ms per query, which is still manageable since it also ensures more precision. In the chatbot era, this was a friction point. In the agentic era, it is a safety premium.

If an agent is about to execute a refund transaction API call, saving 300ms is not worth a 20% drop in accuracy. The computational cost of reranking is negligible compared to the business cost of an autonomous agent acting on the wrong information. As Gartner reports, poor data quality and decision errors cost organizations an average of $12.9 million per year.

Freshness: Determining if relevance is still true

Finding the most relevant document is meaningless if that document is outdated.

Suppose your reranker successfully retrieves the shipping manifest with 99% semantic confidence. But if that manifest was updated in the ERP system 10 minutes ago and your vector database is still serving yesterday's version, your agent is now effectively acting on stale data.

Most RAG demos assume a static world. Ingest a PDF, and it stays true forever. However, in an enterprise, data is a living organism. And, a robust agent should know the difference.

Vector databases are snapshots. They do not automatically sync with your sources of truth like Salesforce, SharePoint, Jira, etc.

To counter the problem, the standard architectural choice for most teams is Daily Batch Indexing, especially for internal knowledge bases, compliance archives, and static document repositories. This is a set-it-and-forget-it script that runs once every 24 hours (usually during low-traffic hours like 2:00 AM) to scan for changes and update the vector database.

In high-stakes environments (like stock trading or live customer support), this lag is unacceptable, which is why production systems often move to two more real-time/ push-based models.

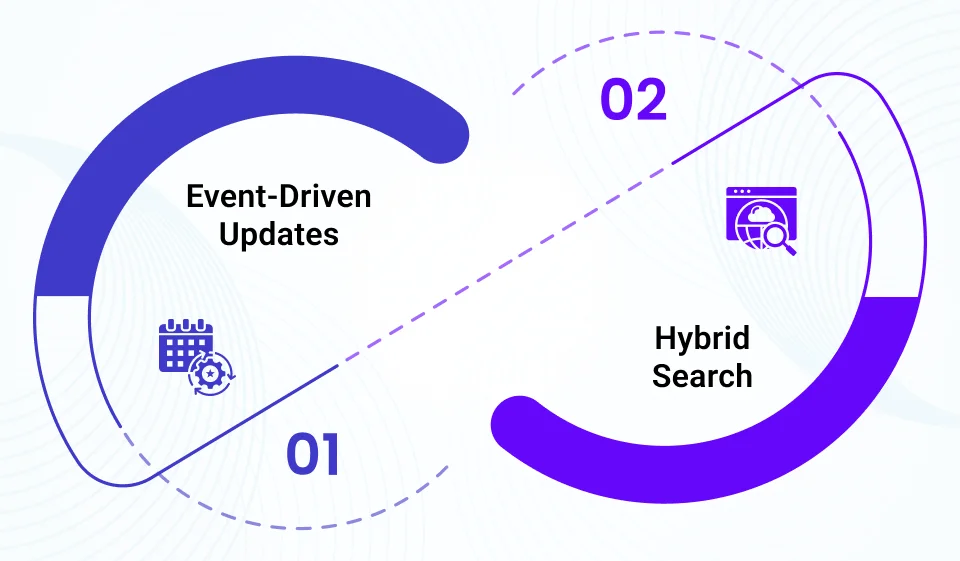

1. Event-driven updates

Instead of waiting for a nightly scan to find changes, the source system announces them immediately. It works by configuring the data source (e.g., Salesforce, SharePoint, or a CMS) to send a webhook whenever a record is saved.

This webhook triggers a specific microservice (like an AWS Lambda or Azure Function) that grabs only that one modified document, chunks it, embeds it, and updates the vector database in seconds. Hence, the time-to-live for stale data drops from 17 hours to seconds.

For highly volatile data, like inventory levels, ticket status, or account balances, vector search is the wrong tool entirely. Why? Because vectors are for knowledge (which changes slowly), not state (which changes instantly).

Vectors work best for policies, procedures, manuals, FAQs, and product documentation, and not for inventory counts, account balances, order status, and live prices.

A robust agent knows the difference between the two. It not only queries the vector database for the procedure "How do I process a return?" but also simultaneously hits the live ERP/SQL API to find the fact, "Is this order eligible for return?"

Here the agent effectively reads the manual and checks the dashboard before giving an answer, ensuring it never hallucinates a refund for an ineligible item.

Conclusion

RAG in production is not a prompt engineering problem. It is a systems engineering problem.

Chunking determines whether your agent sees complete thoughts. Reranking determines whether it sees the right thoughts first. Freshness determines whether those thoughts are still true.

When any one of these fails, the model looks confident while hallucinating.

Production-grade AI is less about making models smarter and more about building guardrails around what they are allowed to see.

Once your agent has reliable context, the next challenge is execution. How do you ensure it doesn't hallucinate? In our next guide, The Orchestrator Explained, we explore how agent decisions are controlled. Make sure to stay tuned and join our mailing list.

Frequently Asked Questions

Larger context windows reduce truncation, but they do not improve ranking quality or information correctness. If irrelevant or stale chunks are retrieved, the model will still generate confident but wrong answers. Retrieval precision matters more than raw context size.

Most production systems retrieve 20-100 candidates in the first pass and then narrow them down to the top 5-10 for generation. The goal is to balance recall (not missing relevant content) with precision (not overwhelming the model with noise). More chunks do not equal better answers. They often increase hallucination risk.

If your knowledge base is small, clean, and highly curated, first-stage vector retrieval may be sufficient. However, as content volume grows or phrasing becomes similar across documents, reranking significantly improves reliability. The need for reranking scales with content complexity, not just size.

Warning signs include incomplete answers, contradictory outputs, or frequent references to partial clauses. If policies or rules span sections and responses omit qualifiers or footnotes, your chunk boundaries may be splitting meaning. Retrieval errors often originate at preprocessing, not at the model layer.