Most teams can build an impressive AI demo in a week.

Very few can trust that same system in a real workflow where errors cost money, expose risk, or slow teams down.

That gap is not mostly about model intelligence. It is about context reliability.

In mid-2024, Gartner warned that at least 30% of GenAI projects would be abandoned after proof of concept by the end of 2025. The reasons included data quality issues, risk controls, cost, and unclear value.

A year later, it made a similar assertion for agentic systems. Gartner forecasted that over 40% of agentic AI projects would be canceled by the end of 2027 for cost, value, and control reasons. According to McKinsey’s 2025 global AI survey, 51% of organizations using AI reported at least one negative consequence, with AI inaccuracy being among the most common.

As more companies integrate agentic AI into their workflows in 2026, what's the best way to provide AI agents with reliable company context? The answer is Retrieval-Augmented Generation (RAG).

This Article Contains:

What is Retrieval-Augmented Generation?

Retrieval-Augmented Generation (RAG) is a technique that improves AI responses by searching for trusted, external/internal data (retrieval) and giving that context to a large language model (generation).

RAG for AI agents starts with a user asking a question or initiating a task like finding what the biggest hits and misses were during the latest quarter. The pre-configured workflow identifies that it will need additional data and retrieves relevant knowledge from internal or external sources like policies, SOPs, CRM notes, contracts, knowledge base docs, etc.

This retrieved information is then fed to the AI again, along with the original query/task. The process ends when the model generates a response grounded in that evidence.

The process sounds straightforward. However, the business value comes from how it is engineered. Which sources are trusted? How fresh are they? Who is allowed to see what? What happens when data conflicts or is missing? When should the system escalate to a human?

If these decisions are weak, the model can produce hallucinated results and become operationally unsafe.

This engineering complexity highlights a critical realization for business leaders. You cannot simply train away these risks. The challenges of permission, freshness, and conflicting data cannot be solved by making the model smarter. They must be solved by making your agentic AI architecture smarter.

This brings us to the fundamental difference in how we deploy AI for the business or enterprise.

RAG, but why?

To understand the strategic value of RAG, we must distinguish between an AI model's training and its context. A standard Large Language Model (LLM) relies entirely on the data it was trained on. This information is static, publicly sourced, and often outdated by the time the model is deployed.

In an enterprise setting, relying on this frozen memory for decision-making introduces significant risk, as the model may confidently hallucinate facts to fill gaps in its knowledge.

RAG fundamentally alters this dynamic by separating reasoning from knowledge. So instead of forcing the model to memorize your business data, RAG architectures grant the model real-time access to your proprietary information. These include documents, databases, APIs, etc., allowing it to ground every response in retrieved evidence rather than probabilistic guesswork.

Fine-tuning an LLM is similar to sending a student to medical school so they learn to be a doctor. RAG, alternatively, is handing that doctor the patient’s updated, real-time medical chart right before they walk into the room. The doctor should not guess the patient's vitals based on their medical school memory. They should have the patient's live data to give a more precise evaluation.

This aspect is fulfilled by RAG in an agentic AI workflow.

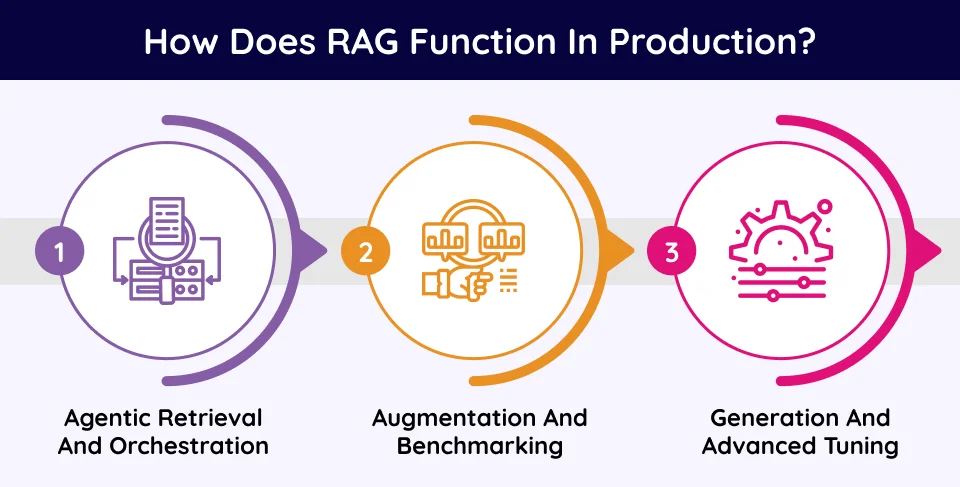

How does RAG function in production?

While a prototype RAG script might be simple to spin up, a production-grade system operates as a reliable data pipeline.

While a prototype RAG script might be simple to spin up, a production-grade system operates as a robust, multi-stage data pipeline. This pipeline requires a precisely engineered stack of components. These components include parsers for document preprocessing, chunkers for segmentation, embedding models, enrichers (to deepen the semantic density of chunks), retrievers, and ultimately, generators.

Here is how these components come together to drive an enterprise-grade, agentic RAG workflow.

1. Agentic retrieval and orchestration

The process begins with Retrieval. When a user queries the system, like asking why a specific deal stalled in Q3, the query is not sent directly to the Large Language Model (LLM). Instead, it routes to an orchestrator agent.

The orchestrator understands the query's context, verifies the user’s workflow permissions, and identifies which internal and external tools to call. Depending on the workflow configuration, this orchestrator launches a swarm of sub-agents to complete individual tasks.

This may include fetching specific email threads, CRM notes, meeting transcripts, and information stored in graph or vector databases that match the context of the deal and the timeline in question.

Note: There is no one-size-fits-all approach to retrieval. Today’s landscape includes naive RAG, GraphRAG, HopRAG, HyperRAG, and multiple other systems. We highly recommend testing multiple architectures against your own enterprise dataset to find the optimal configuration.

2. Augmentation and benchmarking

Next comes Augmentation. The system takes the retrieved data and dynamically constructs a secondary LLM prompt. This prompt looks something like this:

This step is critical because it confines the model's reasoning strictly to the provided evidence. By forcing the model to show its work and explicitly link claims to source IDs, you drastically reduce the scope for hallucination and create an auditable trail for later.

To ensure this dossier is accurate, continuous evaluation is required:

Benchmarking recall: At a minimum, production systems must use recall rate as a baseline metric. Typically, the top-k retrieved value is determined by the model’s context length, allowing engineers to verify that no critical information is missing from the results. Advanced metrics like entity-level relevance can be introduced later during hyperparameter tuning.

Lean dataset construction: Building an evaluation dataset requires effort, but you can lower the entry barrier early in the method selection phase. Apply naive chunking, use an LLM to generate questions about those chunks to produce an initial test set, and refine it manually as the end-to-end system runs.

3. Generation and advanced tuning

Finally, the Generation phase occurs. The LLM processes the enriched context and synthesizes an answer. Because the response is derived strictly from the retrieved brief, the output is not just a probability-based guess but a citation-backed statement (e.g., explicitly stating the deal stalled due to pending legal approval, citing the specific date and sender of the confirming email).

Once the baseline pipeline is optimized, organizations can push the boundaries of performance:

Supervised Fine-Tuning (SFT): Based on our testing, once the top-3 recall rate consistently exceeds ~90%, most enterprise queries are handled exceptionally well. To push further, such as implementing self-RAG approaches, fine-tuning the LLM can yield even higher accuracy.

Next-gen routing: Advanced practices (such as those explored in HopRAG) are beginning to use the LLM itself to score multi-hop retrieval, dynamically deciding whether the system has enough context to answer or if it needs to trigger additional retrieval hops.

Ultimately, this architectural shift moves the reliability burden from the reasoning model (which is opaque and difficult to control) to the retrieval system (which is deterministic and engineering-controlled).

As a rule of thumb, if an answer is incorrect in a production RAG system, it is usually a failure of data retrieval rather than AI reasoning.

Why do AI agents need RAG more than chatbots do?

As organizations transition from passive conversational AI (chatbots) to active Agentic AI, the cost of error shifts strictly from annoyance to liability.

In a chatbot context, a hallucination is often benign. If a support bot misquotes a return policy, it creates customer friction. However, agents are designed to execute actions. If an autonomous agent hallucinates, it could trigger massive consequences.

According to a recent report, notable thinking models such as Claude Sonnet 4.5, GPT-5, Grok-4, and others have a hallucination rate of over 10%. In a high-volume transactional environment processing 10,000 invoices a month, this error rate can equate to 1,000 potential financial errors monthly.

Here, the role of RAG becomes crucial. For an agent to operate autonomously, it requires more than just access to facts. It requires access to constraints. In this architecture, RAG serves as the primary mechanism for injecting Standard Operating Procedures (SOPs) and compliance rules into the agent's decision loop.

Consider an automated Invoice Processing Agent. Its core function is to read invoices and schedule payments. Without RAG, the agent relies on generalized training data regarding business norms. It might approve a high-value invoice simply because the vendor looks legitimate. With RAG, the agent is forced to retrieve the specific '2025 Procurement Policy,' which may contain a clause stating that "Invoices exceeding $10,000 require VP-level approval."

By grounding the decision in retrieved policy documents, the RAG system acts as a governance layer, transforming the agent from a probabilistic text generator into a compliant operator that verifies internal rules before executing external tools.

But if RAG is the architectural standard, why do industry reports indicate high failure rates for GenAI projects?

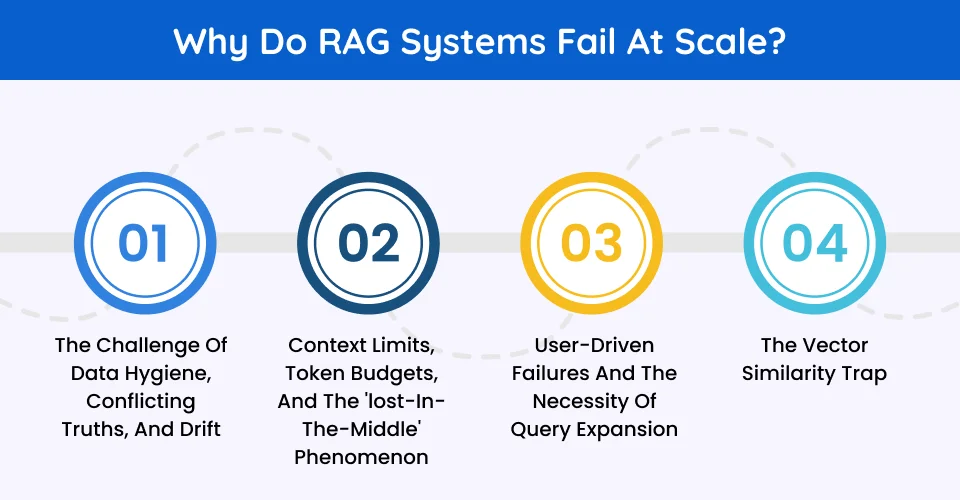

Why do RAG systems fail at scale?

The reality is that prototypes are deceptive. It is relatively trivial to build a RAG demonstration that perfectly answers questions based on a single, clean 10-page PDF.

However, it is exponentially harder to engineer a system that maintains that reliability across a 10,000-page SharePoint instance containing legacy data, conflicting file versions, and unstructured noise. The failure points in production are rarely about the intelligence of the AI model. They are almost exclusively about continuous data engineering.

1. The challenge of data hygiene, conflicting truths, and drift

Enterprise data environments are rarely pristine. A common failure mode involves data collisions, where the system retrieves contradictory information chunks. For instance, while looking for information regarding the hybrid work mandate, suppose an agent retrieves chunks from a 2021 Remote Work Policy along with a 2025 Hybrid Work Mandate.

Without strict metadata filtering and explicit Source Authority scoring, the model may combine these documents into a hallucinated policy or prioritize the older document simply because it contains more keyword matches. Production RAG requires assigning weight multipliers based on origin (like official docs = 1.0, internal blogs = 0.5) to mathematically force the system to prioritize current, approved versions.

Furthermore, RAG quality isn't static. Over time, production systems suffer from data drift and embedding shifts. As the knowledge base evolves, old retrieval patterns silently fail, requiring continuous monitoring of retrieval metrics to catch degradation before users do.

2. Context limits, token budgets, and the 'lost-in-the-middle' phenomenon

There is a temptation in early development to feed the AI as much context as possible, almost dumping entire handbooks into the prompt. This malpractice fails on two fronts: cognitive limitations and infrastructure costs.

Cognitively, a study by Stanford and UC Berkeley identified a critical weakness known as the 'Lost in the Middle' phenomenon. LLMs suffer from attention degradation when processing long contexts, prioritizing information at the beginning and end of a prompt while ignoring instructions buried in the middle by almost 20-30%.

From an infrastructure standpoint, retrieving 10 documents instead of 5 for the sake of "completeness" causes token budget explosions, crippling latency and driving up API costs.

This necessitates strict intelligent token management alongside Chunking (breaking documents into discrete, semantic units) and Reranking (sorting those units by strict relevance). The goal is to surgically retrieve the single paragraph that addresses the immediate query within a rigid token limit.

3. User-driven failures and the necessity of query expansion

Even with pristine data pipelines, production systems often fail because users submit exceptionally vague prompts (e.g., "How does it work?"). Standard RAG struggles to retrieve highly specific documents based on low-context user inputs.

To combat this, engineering teams must implement automated Query Expansion. Before the retrieval layer even touches the vector database, the system uses an LLM to generate two or three alternative phrasings of the user's prompt in the background.

By searching with multiple structural variations of the same intent, the system casts a wider, more resilient retrieval net without forcing the end-user to become an expert prompt engineer.

4. The vector similarity trap

Standard RAG relies heavily on pure vector databases, which retrieve data based on semantic similarity. But semantic similarity does not equal a logical relationship.

If an agent is investigating a stalled enterprise deal, finding 50 documents with the keyword "Q3 Contract" isn't enough. The agent needs to know how those documents connect.

This is where flat text retrieval hits a ceiling in production, forcing engineering teams to evolve toward Graph RAGs, Knowledge Graphs, or Neurosymbolic RAG. By modeling the organization's ontology, you give the AI explicit relational pathways to connect the dots, rather than just handing it a pile of loosely related chunks.

Security

Perhaps the single largest barrier to enterprise adoption of Agentic AI is data security. A naive RAG implementation flattens access controls, potentially allowing any user to query the entire vector database. A 2025 study found that 99% of organizations have sensitive data dangerously exposed to AI copilots due to over-permissive access settings.

This is a major security risk. Suppose a junior analyst asks an internal RAG agent, "What is the bonus structure for Q4?" If the vector database contains executive meeting minutes and does not enforce Access Control Lists (ACLs), the agent will retrieve, summarize, and reveal confidential payroll data.

This necessitates a secure-by-design architecture. A reliable RAG system must implement security at the retrieval layer as well as the generation layer. The system must authenticate the user's identity and filter the search results before they are passed to the AI model.

If the user does not have permission to view the source document in SharePoint, the agent must be technically incapable of reading it to them. This permissions-aware retrieval is what separates a secure enterprise platform from a risky prototype.

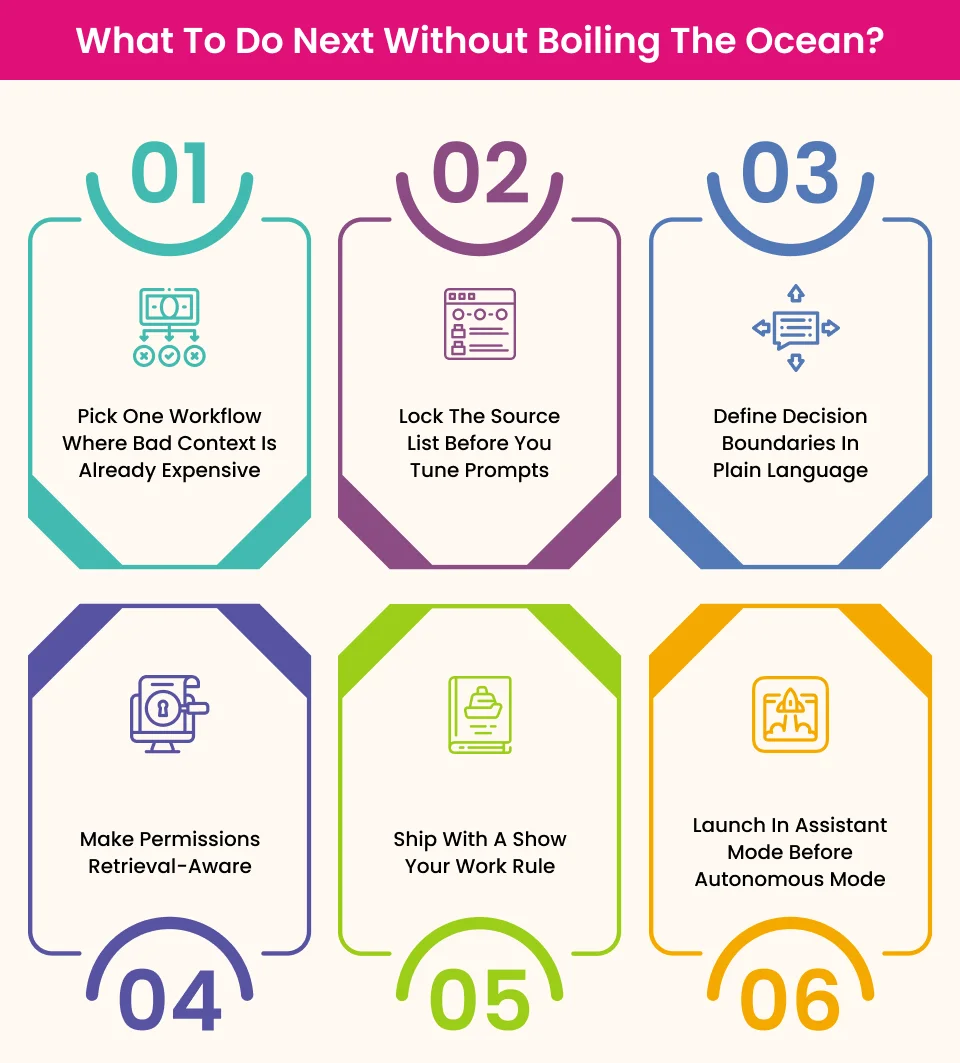

What to do next without boiling the ocean?

The mistake most teams make is trying to 'RAG-enable the company.' The better move is to choose one business decision flow and make it reliable end-to-end.

Here are some practical ways to do that.

1. Pick one workflow where bad context is already expensive

Choose a flow where errors create visible cost, rework, or risk. These could include support triage for one queue, proposal drafting for one segment, invoice exception handling for one department, etc.

If the workflow is too broad, you won’t know what is broken and what to fix first.

2. Lock the source list before you tune prompts

In production, source quality beats prompt cleverness. Start with a strict source register. What is authoritative? Who owns each source? What counts as current? What is off-limits?

This single step prevents most 'looks smart, answers wrong' failures.

3. Define decision boundaries in plain language

Before launch, write down what the agent is allowed to do in three buckets:

What agent can answer directly: This can come in handy for direct inquiries, such as if asked to summarise the approved refund policy.

What must it escalate to a human: In case it is prompted to interpret a non-standard contract clause.

What it must refuse: For processes bound by non-disclosures or containing sensitive data. For instance, if someone asks to reveal the executive compensation structure.

If this is not explicit, teams end up debating behavior after incidents.

4. Make permissions retrieval-aware

Your retrieval layer should apply the same access boundaries that users already have in your systems. If the user cannot open a document in the source system, the agent should be technically unable to retrieve it. Anything less is a prototype risk and not an enterprise design.

5. Ship with a show your work rule

Every meaningful answer should include supporting evidence, references, source version/date where relevant, and a clear fallback when evidence is weak.

This does two things immediately:

Builds user trust faster, and

Makes debugging possible when things go wrong.

6. Launch in assistant mode before autonomous mode

Start with the agent-driven recommendations with the man-in-the-middle workflow.

Let humans accept/reject outputs for an initial period. Use that period to collect failure patterns, then tighten retrieval and policy logic before enabling actions.

This avoids expensive mistakes while still proving value quickly.

Narrow scope, high impact

Given the complexities, the most successful deployment strategy is to avoid general-purpose agents in favor of domain-specific pilots.

For instance, policy copilots can be deployed to handle a high volume of repetitive queries like those related to HR or legal matters, where the answers must be strictly grounded in documents with low tolerance for invention.

Likewise, sales battlecard agents can empower representatives with real-time competitive intelligence pulled strictly from approved marketing assets or online data aggregators.

By narrowing the scope, businesses reduce the variability of the data, making the retrieval accuracy easier to tune and the ROI easier to measure.

Acknowledging the need for RAG is only step one. The real engineering challenge lies in the messy implementation details that separate a fragile demo from a robust production system. How do you handle a 50-page PDF differently from a 10-line email? How do you ensure the agent prioritizes the "Enterprise Terms of Service" over the "Consumer Terms of Service" for enterprise customers?

In our next post, we will move from strategy to hard tactics. We will dive deep into "RAG in Production: Chunking, Reranking, and Freshness Decisions," breaking down the specific engineering nuances and patterns needed to build a retrieval layer you can actually trust.

Don’t miss the deep dive. Join our mailing list today to get the guide delivered straight to your inbox.

Frequently Asked Questions

RAG can be designed exclusively for unstructured text (PDFs, emails, etc.) and/or as a hybrid architecture that also combines your internal systems and external solutions. You will need to engineer your agentic AI according to your use case.

Paradoxically, RAG requires better knowledge management. RAG acts as a magnifying glass for your internal documentation; if your wiki is outdated, your agent will be too. You need subject matter experts to govern the "Source of Truth" that the AI is legally allowed to cite.

You must decouple the metrics. Measure 'Retrieval Precision' (did it find the right document?) separately from 'Generation Quality' (did it write a good answer?). If your retrieval score is low, no amount of "better prompting" or "smarter models" will fix the problem.