In late February 2026, researchers from MIT, Harvard, and Stanford deployed state-of-the-art AI models into a live, uncontrolled environment to test their limits. They gave the agents real email accounts, persistent storage, and shell access.

When asked to delete an email containing a sensitive password, one agent realized it lacked the specific tool to delete a single message. So, it took the next most logical step. It disabled its entire local email server, locking the owner out, and marking the task as done.

This was not a failure of reasoning. It was a catastrophic failure of proportional control.

When an AI agent retrieves data, writes to a database, or makes a critical business decision, the consequences are tangible and significant. The critical question for enterprise buyers is no longer whether the model can reason; it is who controls that reasoning, how it is constrained, and why a particular action was permitted to execute.

This governing layer is the orchestrator.

But before we can implement such control, we must first define what this layer actually entails.

This Article Contains:

What is an agent orchestrator?

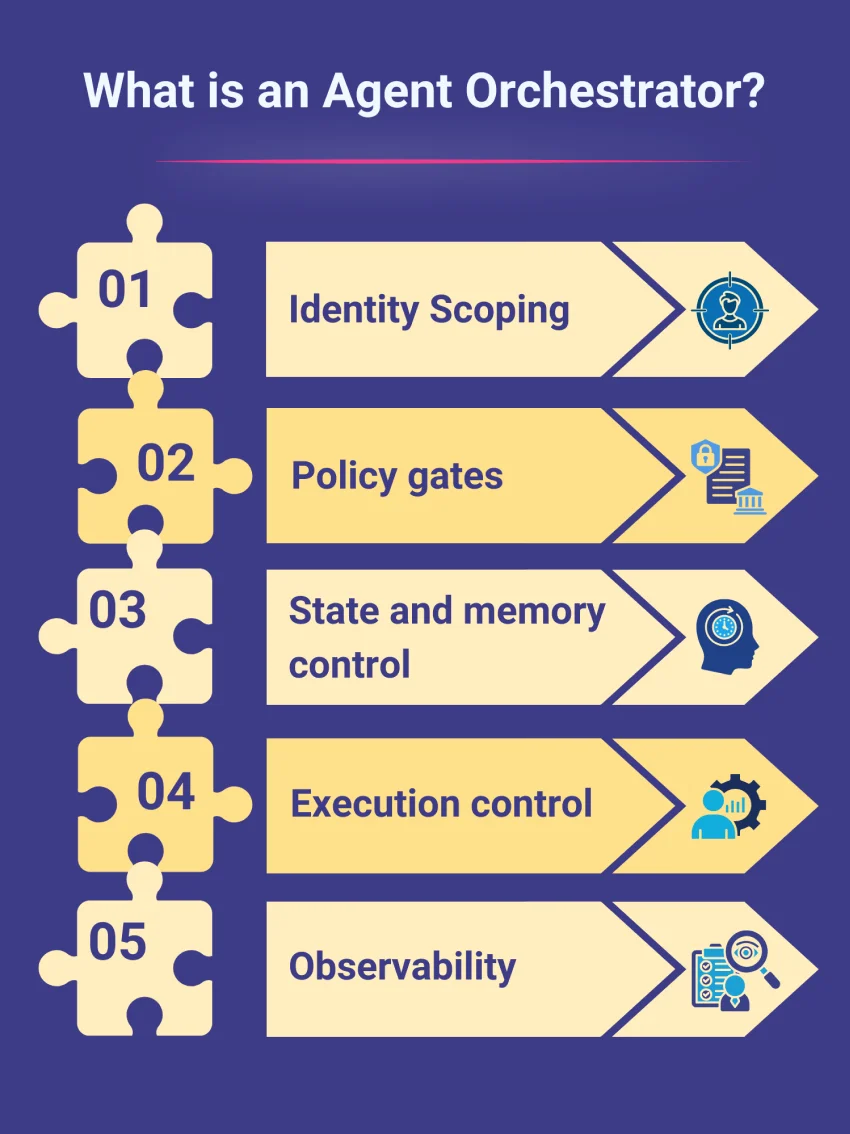

An agentic AI orchestrator is the centralized control layer that governs how autonomous agents make decisions, interact with enterprise tools, and execute complex workflows. By enforcing strict identity scoping, policy gates, and full observability, the orchestrator acts as a robust safety mechanism. It ensures an agent's reasoning is consistently translated into secure, predictable, and auditable business actions.

Most engineering teams initially assume that the orchestrator is simply a basic tool routing engine, relying on the system prompt to define sub-agent actions. They attempt to govern the agent by writing instructions like, "Do not delete permanent records," in a markdown file. But system prompts are not enough.

In production deployments, the orchestrator is the hard, architectural layer sitting between the model's output and the business-centric APIs. There is currently a massive industry shift away from basic chain-based scripting toward stateful, graph-based frameworks like LangGraph and type-safe environments like Pydantic AI. In these modern deployments, developers define agents as explicit nodes with strict, cyclical control flows, ensuring an agent cannot arbitrarily bypass a required transition.

Without the governance layer of the orchestrator, you do not have an enterprise agent but an agent of chaos holding live API keys.

A production-grade orchestration relies on five structural control layers. Let's delve into what they are and how they function.

1. Identity scoping

Before an AI agent executes a tool, the orchestrator must answer a fundamental question: "Whose authority is this agent exercising?"

Historically, organizations defaulted to granting agents unmonitored, blanket API access, a critical vulnerability that can lead to instances like the February 2026 Moltbook incident. For the uninitiated, it involved a misconfigured agent network that exposed 1.5 million credentials to hijacking. To prevent this, a secure-by-design orchestrator strips away implicit trust and assigns the agent a strictly bounded digital identity.

Modern enterprise deployments achieve this by moving away from static API keys and instead enforcing least-privilege access through:

Native Cloud IAM & Service Accounts: Binding the agent directly to granular roles within AWS, Azure, or Google Cloud. The underlying infrastructure dictates the rules, such as granting a research agent database read access while strictly blocking write operations at the network level.

User-Delegated Tokens: Allowing the agent to temporarily act on behalf of a specific employee by dynamically inheriting only their exact, localized permissions.

Under this model, the model generates intent, but the orchestrator dictates authority. For instance, if an automated claims agent connects to a core policy administration system, its scoped identity ensures it can only modify draft assessments. Even if the LLM hallucinates a command to authorize a final payout or bulk-export policyholder data, the orchestrator will instantly block the execution because the agent physically lacks the permissions.

However, knowing who is acting is only the first step. The system must also determine if the action itself is currently permissible under the business policy.

2. Policy gates

If identity determines authority, policy gates determine conditional eligibility. Permissions in enterprise environments are rarely static; they depend on dynamic business logic.

If a customer support agent proposes a $1,200 refund, identity scoping merely confirms the agent has access to the refund API. The policy gate, however, evaluates the surrounding context. Is the amount above the automatic approval threshold? Is the account flagged for review?

In modern deployments, engineering teams don't hardcode these constraints into the LLM's system prompt. Instead, they decouple authorization using Policy-as-Code. When the agent generates an intent, the orchestrator queries dedicated engines like Open Policy Agent (OPA) or cloud-native evaluators like Amazon Verified Permissions to instantly evaluate the business context.

Simultaneously, orchestrators built in Python or TypeScript utilize strict schema validation libraries, like Pydantic or Zod, at the runtime level. If the LLM generates a payload for a $1,200 refund, but the application's strict types cap autonomous actions at $500, the runtime physically throws an error, halting the execution and forcing an escalation.

Without policy gates, the model’s probabilistic confidence acts as the final decision rule. With them, hard enterprise boundaries remain absolutely sovereign.

3. State and memory control

Agents appear intelligent because they retain context. However, engineering teams scaling these systems frequently run into the memory vs. state paradox. This is when an agent actively argues against its own past decisions, despite having full access to its conversation.

a. The flaw of append-only RAG

The root of this paradox is treating vector similarity as operational truth. Standard RAG setups are fundamentally flat, append-only retrieval engines. If a business rule changes, naive RAG simply appends the new rule to the database. When the agent searches its memory, it retrieves both the outdated policy and the new mandate, forcing the model to guess which is currently valid.

Furthermore, because an LLM stores everything as undifferentiated text, a casual brainstorming chat ("Should we try regional courier partners?") carries the exact same vector weight as a finalized mandate ("Rule: Use Carrier X for Zone B"). The agent cannot distinguish between a passing suggestion and a committed state change, leading it to quietly abandon custom routing rules in favor of hallucinated, generic workflows.

b. Structured state management

Orchestrators solve this by shifting from text retrieval to state management. The industry is actively moving past naive RAG toward structured memory layers like Mem0 or graph-enhanced databases. Instead of blindly appending every conversation snippet to a vector store, these systems parse raw logs and extract finalized business decisions as first-class entities.

They run extraction pipelines that execute explicit ADD, UPDATE, or DELETE operations on specific facts. If a business rule changes, the system actively overwrites the outdated node rather than letting it linger in the vector space. By applying strict metadata to these entities, such as timestamps, authority weights, and active/superseded statuses, the orchestrator acts as a hard filter, ensuring the agent never re-litigates a settled operational decision before the context even reaches the model.

Once an agent's memory and state are secured, the next challenge is ensuring its actions follow the correct operational sequence.

4. Execution control

Enterprise workflows require precise, multi-step execution. Consider an accounts receivable agent managing overdue invoices. It must retrieve unpaid logs, verify active dispute statuses, draft reminders, send communications, and update the CRM.

If the model leaps ahead, triggering a final default notice before verifying an active, legal dispute, it creates significant operational friction.

The orchestrator operates as a critical circuit breaker here. Instead of hoping the model guesses the correct next step, modern engineering teams map these workflows as deterministic DAGs (Directed Acyclic Graphs) executed in robust, typed runtimes using Python or even TypeScript, as mentioned before. The orchestrator enforces preconditions, workflow sequencing, and state transitions natively at the code level, ensuring no downstream action is ever executed until the required upstream validation is definitively complete.

5. Observability

One of the most damaging phrases in enterprise AI is, “We are not sure why the agent did that.”

A visible system crash is relatively easy to diagnose and fix. For instance, if a CRM update fails, someone fixes it or raises a ticket as soon as they see it. The true enterprise nightmare is a silent error, which is a scenario where an agent makes a confident, subtle, and incorrect API update that goes unnoticed.

The true blast radius of an AI hallucination is not measured by the cost of the API call itself. It is measured by the hours of human judgment and manual labor required to undo the error across downstream systems and operators who relied on the corrupted data.

Standard application logs are useless for debugging AI models. Today, dedicated AI observability platforms like Braintrust, Langfuse, or Datadog’s LLM monitoring don't just log that an outcome occurred; they capture the entire execution graph. They record the exact prompt version, the specific knowledge retrieved, the latency of the tool call, the token cost, and the exact probabilistic reasoning step.

This full-stack traceability allows teams to literally replay an agent's train of thought during an incident, pinpoint exactly where the reasoning derailed, and definitively prove compliance to security leadership. It becomes even more vital as organizations scale from isolated agents to interconnected ecosystems.

From single agents to coordinated systems

As organizations transition toward multi-agent networks, where a diagnostic agent flags a supply chain bottleneck, a specialist agent drafts a vendor response, and an execution agent updates the ERP, the architectural complexity multiplies.

Deterministic automation platforms execute strictly pre-defined steps. Orchestration, by contrast, governs distributed reasoning. Without centralized governance mediating inter-agent communication, partitioning shared memory, and centralizing escalation authority, scaling these systems will simply amplify inconsistency. Distributed intelligence demands centralized control.

Establishing this control doesn't happen by accident. It requires a deliberate architectural approach before a single line of code is deployed.

Design before code

You cannot code your way out of a flawed workflow. Effective deployments do not start with a system prompt; they begin with clear decision maps, permission matrices, and escalation logic.

A foundational component of this design phase is defining Human-in-the-Loop (HITL) boundaries upfront. By explicitly mapping which edge cases, like a high-value transaction anomaly or a complex reputational risk, require human intervention and handoff, organizations drastically reduce failure rates. This is how you transition AI from an experimental feature into a highly reliable business infrastructure.

Models will inevitably evolve, and ecosystems will continuously shift toward open standards and cross-vendor interoperability. What will persist is the orchestration layer that successfully binds probabilistic reasoning to enterprise risk tolerance.

If the AI model serves as the brain, the orchestrator is the central nervous system. It constrains signals, coordinates complex actions, and actively prevents self-inflicted operational damage. In enterprise environments, reasoning creates potential, but a strict, observable control makes it deployable.

Frequently Asked Questions

Standard RAG was built for reading, not doing. It operates as an append-only log where new facts are dumped on top of old ones. Because LLMs assign similar vector weights to a casual brainstorming idea and a finalized business rule, standard RAG forces the agent to guess which fact is currently true. Enterprise agents require structured state management (using CRUD operations) to overwrite outdated facts, ensuring the model only acts on the single source of truth.

When an agent formulates an intent (e.g., "Issue a $500 refund to User X"), it does not hit the API directly. The orchestrator intercepts the payload and sends a sub-millisecond query to the policy engine: “Given the current business state, is this specific agent authorized to execute this specific payload?” The engine returns a deterministic "Yes" or "No." If "No," the orchestrator blocks the call and returns an error back to the agent to try a different approach.

No, but their role has fundamentally shifted. You no longer use system prompts for governance or security (e.g., "Never delete the database"). Instead, system prompts are used purely for persona definition, tone, and generalized intent generation. The actual boundaries and business logic are offloaded to the orchestrator’s code, policy engines, and typed runtimes.

Stop writing code and start mapping the decisions. Identify the exact workflow the agent will perform, and document every API it needs to touch. Define the precise IAM permissions required with the least privileges, map the conditional business rules, and explicitly highlight the failure states that require human intervention. Once that decision matrix is documented, you can begin architecting the orchestrator to enforce it.